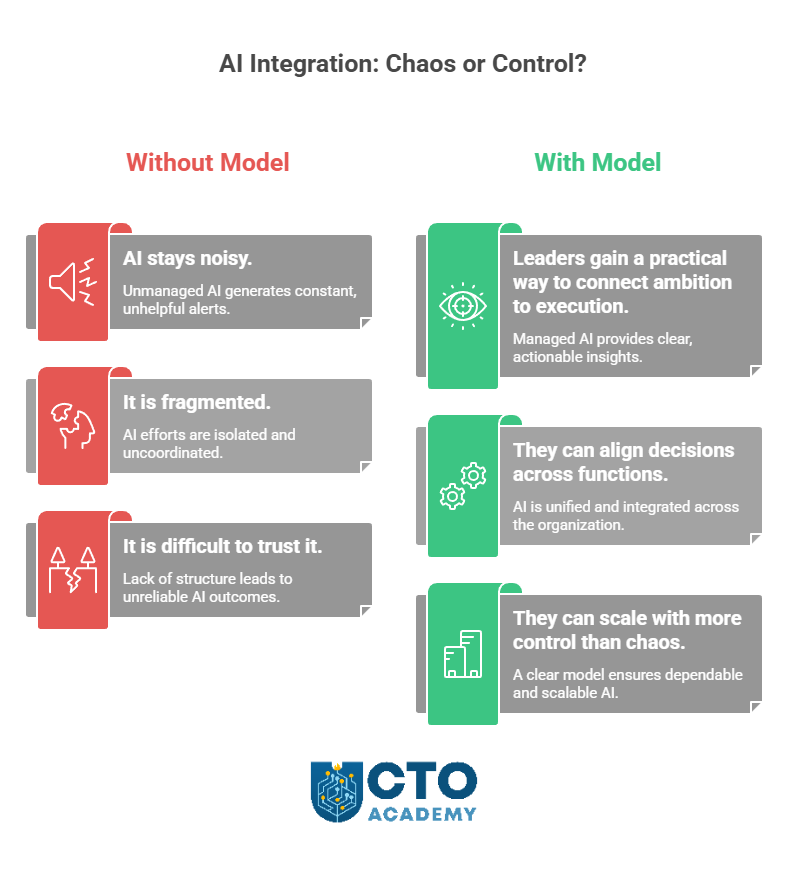

The reality is that AI is everywhere in the board narrative, but often nowhere in the operating model. The result? Programs look busy, roadmaps look ambitious, and reporting looks active, yet accountability remains thin. Nobody is fully sure which use cases should scale, who owns the decision, or what “production-ready” means. In short, many organizations do not yet know how to run AI inside the business in a way that is governed, useful, and repeatable.

So the real bottleneck is operating practice: many leaders put an AI operating model in place too late, or never put one in place at all.

What follows is a practical framework for getting that control back. This guide will help you separate signal from noise, identify why so many AI efforts stall between pilot and production, and put a more usable structure around decisions, ownership, risk, and delivery. Rather than offering another high-level strategy view, it will give you a field-ready operating model with roadmaps you can use to assess what should scale, what should pause, and what needs redesign before more investment goes in.

TL;DR

- AI is not failing because of a lack of ambition. It is failing because many organizations still lack a usable operating model.

- The real gap is between pilot activity and accountable production: teams experiment, but ownership, decision rights, and scale criteria remain unclear.

- A strong AI operating model defines six essentials: ownership, readiness, governance, rollout, monitoring, and executive review.

- This helps leaders decide what should scale, what should pause, and what needs redesign before more time and budget are committed.

- The goal is simple: turn AI from scattered experimentation into governed, useful, repeatable delivery.

Pilot vs Production

This is where many teams get stuck: they treat pilot activity and production readiness as if they were only a few steps apart. In practice, the two operate under entirely different standards, as Table 1 shows.

Table 1: Pilot vs production and what changes when AI becomes accountable

| Area | Pilot mode | Production mode |
| --- | --- | --- |
| Primary goal | Explore potential and test whether the use case is worth pursuing | Deliver reliable value in a live business environment |
| Ownership | Interest is shared across teams, but accountability is often still loose | A named business owner and delivery owner are clearly accountable |
| Success criteria | Early signals, directional feedback, and rough promise | Defined outcomes, measurable KPIs, and agreed thresholds for success |
| Decision-making | Informal, fast-moving, and often dependent on sponsor enthusiasm | Structured, documented, and tied to clear decision rights |
| Risk review | Partial, delayed, or handled in parallel with experimentation | Built into the operating path before broader rollout |
| Security and compliance | Considered when concerns become visible | Addressed as a standard requirement before scale |
| Workflow integration | Tested in limited or artificial conditions | Proven inside real workflows, systems, and user behavior |
| User adoption | Interest is assumed or lightly tested | Adoption, training, support, and behavior change are actively managed |
| Monitoring | Limited oversight during testing | Active monitoring for performance, misuse, drift, and exceptions |
| Incident response | Issues are handled informally by the project team | Clear escalation, response ownership, and rollback procedures are in place |
| Funding logic | Small-scale, experimental, and easy to justify informally | Supported by a clearer business case, operating cost view, and resourcing plan |
| Executive visibility | Reported as activity or innovation progress | Reported as portfolio progress, risk position, and decisions required |

The Cost of Staying in Pilot Mode Too Long

- Leadership credibility weakens as execution slows: teams stay busy maintaining optionality instead of making decisions.

- Confusion grows about where value is actually being created: executives hear progress updates, but still cannot see which use cases deserve investment, which should stop, and who owns the final call.

- Parallel pilots consume more and more attention while confidence in the program falls.

Pilot theater is not just a tooling problem. It is a leadership problem.

The Underlying Purpose of an AI Operating Model

An AI operating model is, in effect, the translation layer between ambition (pilot) and accountable delivery (production). It turns broad goals into repeatable operating practice by defining three things:

- What sits where

- Who decides what

- How progress becomes governable

6 Components of an AI Operating Model

Table 2: Six components of the AI operating model and questions they answer

| Component | Core question it answers | Best practice |
| --- | --- | --- |
| Ownership and decision rights | Who owns the decision? | Assign a named business owner, a named delivery owner, and a clear escalation path for every use case. |
| Readiness and use-case selection | What is ready to move forward? | Define the problem, measurable value, workflow fit, data availability, manageable risk, and a shared definition of production-ready. |
| Governance and risk controls | What must be reviewed and controlled? | Build risk into the operating path early, with clear review points, evidence requirements, and escalation rules. |
| Delivery and rollout sequencing | How does work move into production? | Use a staged rollout path: test in a bounded setting, validate value, confirm controls, integrate into workflow, and scale deliberately. |
| Incident response and monitoring | How do we manage issues after launch? | Monitor performance, exceptions, and misuse actively, with clear response ownership and rollback authority. |
| Executive communication and review cadence | How does leadership stay informed and accountable? | Run regular portfolio reviews covering progress, risk, readiness, ownership, and the decisions leadership must make next. |

Taken together, these six components form a usable operating model because they answer all six questions leaders keep running into. That is what turns AI from scattered experimentation into accountable delivery.

Where Most Tech Leaders Get Stuck

A common pattern looks like this:

A product team wants to move a promising AI feature forward because early testing looks strong and executive interest is high. Security pushes back because the controls, data boundaries, or review steps are still unclear. Engineering is already partway into implementation. Data is being asked for support. The meetings multiply, but the decision does not get better.

So here, we have a perfect storm:

- Unclear ownership (across product, engineering, data, and security)

- Pilots without scaling criteria

- Risk review that arrives too late

- No shared definition of acceptable value or acceptable risk

- Executive pressure without operating clarity

This is all avoidable if we implement an AI operating model in time.

Practical AI Operating Model (for technology leaders)

The model’s structure should answer these four questions:

- Who sets direction?

- Who executes?

- Where does a cross-functional review happen?

- How does executive oversight remain focused on the right decisions?

Then, it should define core dependencies, as described in Table 3:

Table 3: AI operating model with responsibilities, ownership, decision rights, and review cadence

| Responsibility area | Primary owner | Decision rights | Review cadence |
| --- | --- | --- | --- |
| Priorities and risk appetite | Leadership team | Set strategic priorities, funding intent, and acceptable risk thresholds | Monthly or quarterly |
| Execution and workflow integration | Product and delivery teams | Build, test, implement, and improve approved use cases | Weekly |
| Security, privacy, legal, and procurement review | Cross-functional review group | Approve, conditionally approve, escalate, or stop based on control requirements | At key stage gates |
| Portfolio visibility and go/no-go oversight | Executive sponsors | Reallocate resources, remove blockers, and make scale, pause, or stop decisions | Monthly |

6 Templates That Make the Model Usable

For an AI operating model to evolve beyond a leadership idea into a working management system, you will need six templates.

AI Readiness Scorecard

- Helps teams decide whether a promising use case is actually ready for controlled rollout.

- Prevents teams from scaling enthusiasm ahead of evidence by forcing a practical review of workflow fit, data quality, risk exposure, ownership, and measurable value.

- Used after initial interest is established, but before a pilot is allowed to expand.

Here is an example AI readiness scorecard you can use right away.

Table 4: AI readiness scorecard (example)

| Assessment area | What to check | Key question | Score (1–5) | Red flags if weak |
| --- | --- | --- | --- | --- |
| Problem clarity | The business problem is specific, understood, and worth solving | Is the use case tied to a real operational or commercial problem? | | Vague objective, novelty-led use case, no clear pain point |
| Strategic relevance | The use case supports a current business priority | Does this initiative clearly connect to a strategic goal or measurable priority? | | Interesting idea, but weak executive relevance |
| Value case | Expected value is defined in practical terms | Can the team describe the expected gain in cost, speed, quality, revenue, or risk reduction? | | Benefits are assumed, not quantified |
| Success criteria | Clear outcomes and KPIs are agreed upon upfront | Do we know how success will be measured during the pilot and after rollout? | | No baseline, no agreed KPIs, no threshold for scale |
| Ownership | Accountability is explicit across business and delivery | Is there a named business owner and a named delivery owner? | | Shared interest but no final owner |
| Decision rights | Approval and escalation paths are defined | Do we know who can approve, pause, escalate, or stop the initiative? | | Too many stakeholders, no final call |
| User workflow fit | The use case fits real work, not just a technical demo | Will this improve an existing workflow that people actually use? | | Impressive output, weak day-to-day adoption case |
| User adoption readiness | Change, training, and team adoption have been considered | Are users likely to trust, adopt, and use the solution consistently? | | No training plan, unclear user behavior impact |
| Data readiness | The required data is available, accessible, and usable | Do we have the right data quality, structure, permissions, and lineage? | | Poor data quality, access gaps, unclear provenance |
| Technical feasibility | Integration and engineering complexity are understood | Can this be implemented within the current architecture and tooling? | | Demo works in isolation, but not in the production stack |
| Security readiness | Security review requirements are known and manageable | Have data handling, access control, and exposure risks been assessed? | | Sensitive data risk, unresolved access concerns |
| Privacy and legal readiness | Privacy, regulatory, and contractual implications are understood | Are there any privacy, compliance, IP, or legal blockers? | | Legal review not started, unclear data rights |
| Model risk | Reliability, explainability, and failure modes are understood | Do we understand accuracy limits, hallucination risk, and edge cases? | | Model behavior not tested in realistic conditions |
| Operational controls | Monitoring, incident handling, and rollback plans exist | If this fails, drifts, or causes harm, do we know what happens next? | | No monitoring owner, no rollback path |
| Vendor readiness | Third-party tools have been properly assessed | If a vendor is involved, have security, commercial, and support checks been completed? | | Vendor selected on demo strength alone |
| Delivery capacity | The team has the people and time to execute | Do we have sufficient product, engineering, data, and governance capacity? | | Pilot approved without delivery bandwidth |
| Production readiness | The team has defined what “ready to scale” means | Are the technical, operational, and control thresholds for rollout explicit? | | Pilot continues with no scale gate |
| Executive visibility | Leadership can review progress and unblock decisions | Is this use case visible in the right governance and reporting cadence? | | Work is active but not decision-visible |

Suggested scoring guide

| Score | Meaning |
| --- | --- |
| 1 | Not in place |
| 2 | Major gaps |
| 3 | Partially ready |
| 4 | Mostly ready |
| 5 | Ready with confidence |

Table 5: Suggested interpretation of the scorecard

| Total readiness result | Meaning | Recommended action |
| --- | --- | --- |
| 75–90 | Strong readiness | Proceed to controlled rollout |
| 55–74 | Moderate readiness | Proceed only with targeted gap closure |
| 35–54 | Weak readiness | Keep in pilot or redesign |
| Below 35 | Low readiness | Do not scale |

Optional decision rule

You can also add a simple gate beneath the table:

- No use case should scale if Ownership, Success criteria, Security readiness, Privacy and legal readiness, or Production readiness scores below 3.

- Any category scored 1 requires explicit review before more investment is approved.

A concise label for the box could be: “Ready to scale, or only ready to discuss?”
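The scoring guide, the Table 5 bands, and the optional gate can be combined in a few lines of code. The sketch below is one possible encoding, not a prescribed tool: the function name `assess_readiness` and the shape of the returned dictionary are assumptions made for illustration, while the area names, bands, and gate rules are taken from the tables above.

```python
from typing import Dict, List

# The 18 assessment areas from Table 4.
AREAS: List[str] = [
    "Problem clarity", "Strategic relevance", "Value case", "Success criteria",
    "Ownership", "Decision rights", "User workflow fit", "User adoption readiness",
    "Data readiness", "Technical feasibility", "Security readiness",
    "Privacy and legal readiness", "Model risk", "Operational controls",
    "Vendor readiness", "Delivery capacity", "Production readiness",
    "Executive visibility",
]

# Areas named in the optional decision rule: a score below 3 blocks scaling.
GATE_AREAS: List[str] = [
    "Ownership", "Success criteria", "Security readiness",
    "Privacy and legal readiness", "Production readiness",
]

# (lower bound, meaning, recommended action) from Table 5, highest band first.
BANDS = [
    (75, "Strong readiness", "Proceed to controlled rollout"),
    (55, "Moderate readiness", "Proceed only with targeted gap closure"),
    (35, "Weak readiness", "Keep in pilot or redesign"),
    (0, "Low readiness", "Do not scale"),
]


def assess_readiness(scores: Dict[str, int]) -> dict:
    """Total the 1-5 scores, map the total to a band, and apply the gate."""
    for area in AREAS:
        if area not in scores:
            raise ValueError(f"Missing score for: {area}")
        if not 1 <= scores[area] <= 5:
            raise ValueError(f"{area}: score must be between 1 and 5")
    total = sum(scores[area] for area in AREAS)
    meaning, action = next((m, a) for low, m, a in BANDS if total >= low)
    return {
        "total": total,
        "meaning": meaning,
        "action": action,
        # Gate areas scoring below 3: the use case should not scale yet.
        "gate_failures": [a for a in GATE_AREAS if scores[a] < 3],
        # Any area scored 1 requires explicit review before more investment.
        "needs_review": [a for a in AREAS if scores[a] == 1],
    }
```

With every area scored 4, the total is 72, which lands in the moderate band. Dropping Ownership to 2 lowers the total to 70 and also trips the gate, so the use case should not scale until ownership is fixed, whatever the total says.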

AI Risk Register

- Helps leaders decide which risks are known, who owns them, and what must be monitored or mitigated before scale.

- Best used from the start of delivery to prevent late surprises, duplicated review, and the dangerous assumption that risk sits only with security or legal.

Table 6: AI risk register (example)

| Risk area | What the risk looks like in practice | Why it matters | Primary owner | What good control looks like |
| --- | --- | --- | --- | --- |
| Data privacy | Sensitive data is entered into an AI workflow without approved handling rules | Privacy exposure can quickly become a legal, customer, and trust issue | Security/Privacy | Clear data-use rules, approved environments, and privacy review before rollout |
| Security exposure | Prompts, outputs, or integrations create a path for data leakage or unauthorized access | A promising use case can become a security incident if controls arrive too late | Security | Access controls, environment isolation, output filtering, and pre-launch testing |
| Output reliability | The model produces inaccurate, inconsistent, or misleading responses | Weak reliability undermines trust and can create real operational damage | Product/Delivery | Testing against real scenarios, human review where needed, and agreed quality thresholds |
| Bias and fairness | Outputs create uneven or unfair outcomes across users, groups, or decisions | This can create ethical, reputational, and regulatory risk at the same time | Product/Risk/Legal | Fairness testing, sensitive-use-case review, and defined escalation if concerns appear |
| Legal or regulatory exposure | The use case conflicts with compliance obligations, sector rules, or contractual terms | AI can move faster than policy, but the business still carries the accountability | Legal/Compliance | Early legal review, clear usage boundaries, and documented approval for sensitive cases |
| Vendor dependency | The solution depends too heavily on a third party’s model, pricing, uptime, or roadmap | A strong pilot can still create lock-in, cost shocks, or control gaps later | Procurement/Architecture | Vendor due diligence, fallback options, and clear contract and exit terms |
| Integration failure | The tool works in demo conditions but struggles inside live systems and workflows | Pilot success means little if the workflow cannot support production use | Engineering/Delivery | Real workflow testing, staged rollout, and clear integration checkpoints |
| Ownership ambiguity | Product, engineering, data, and security are all involved, but nobody owns the final call | Shared involvement without clear accountability slows decisions and weakens trust | Executive sponsor | Named business owner, named delivery owner, and explicit decision rights |
| Monitoring gap | A use case goes live without performance tracking, alerting, or rollback planning | Launch is not the finish line; unmanaged drift and misuse create avoidable risk | Operations/Delivery | Monitoring, incident triggers, response ownership, and rollback procedures |
| Low adoption or misuse | Users ignore, bypass, or misuse the AI capability in real work | Even technically sound solutions fail if teams do not trust or use them well | Product/Change lead | Training, workflow guidance, user feedback loops, and adoption monitoring |
| Cost creep | Usage scales faster than expected and erodes the business case | AI value can disappear quickly if cost control is weak | Product/Finance | Spend thresholds, usage monitoring, and regular commercial review |
| Reputation risk | Poor outputs or public-facing failures damage confidence internally or externally | One visible failure can outweigh several quiet successes | Communications/Product/Risk | Restricted rollout, clear safeguards, and prepared incident communication |

How to use the register

This kind of register works best when used as a live leadership tool, not a compliance document. It should help teams answer four practical questions:

- What could go wrong?

- Who owns it?

- What controls are in place?

- When should leadership intervene?

A simple way to use it:

- Review it before a pilot is approved.

- Revisit it before broader rollout.

- Bring it into executive reviews when scale, pause, or stop decisions are being made.
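If the register is kept in a spreadsheet or tracker, some of these questions can be checked mechanically. The following sketch assumes a heavily simplified entry with three fields. `RiskEntry`, the `controls_in_place` flag, and the rule that a risk needs leadership attention when it has no named owner or no active control are illustrative assumptions, not part of the register itself.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RiskEntry:
    area: str                 # "Risk area" column in Table 6
    owner: Optional[str]      # "Primary owner"; None if nobody is named yet
    controls_in_place: bool   # assumed flag: are the agreed controls live?


def needs_leadership_attention(register: List[RiskEntry]) -> List[str]:
    """Return risk areas with no named owner or no active control (assumed rule)."""
    return [r.area for r in register if r.owner is None or not r.controls_in_place]


# Hypothetical register state for a single use case.
register = [
    RiskEntry("Data privacy", "Security/Privacy", True),
    RiskEntry("Vendor dependency", "Procurement/Architecture", False),
    RiskEntry("Ownership ambiguity", None, False),
]
print(needs_leadership_attention(register))
```

In this hypothetical state, vendor dependency and ownership ambiguity would be flagged for the next executive review, while data privacy would not.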

Pilot Selection Criteria

- Help leaders decide which use cases deserve time, budget, and executive attention.

- Prevent random experimentation, political prioritization, and weak use cases surviving on visibility alone.

- Best applied before the pilot portfolio gets crowded.

Table 7: Evaluation criteria

| Selection area | What leaders should test | Why it matters | What good looks like |
| --- | --- | --- | --- |
| Business problem | Is the use case tied to a specific operational, commercial, or customer problem? | Prevents pilots from being built on novelty rather than need | Clear problem statement with visible relevance to the business |
| Strategic relevance | Does the use case support a current priority or meaningful leadership objective? | Keeps the pilot activity connected to the actual direction | Clear link to a business goal, priority, or measurable pressure point |
| Value potential | Is there a plausible case for value if the pilot succeeds? | Avoids spending time on use cases with weak upside | Expected gain is described in terms of cost, speed, quality, revenue, or risk |
| Workflow fit | Will this improve a real workflow used by real teams or customers? | Separates practical use cases from impressive demos | Strong fit to day-to-day work, with identifiable users and usage context |
| User needs and adoption | Are users likely to trust, adopt, and benefit from it? | Technically strong pilots still fail if adoption is weak | Clear user case, likely demand, and basic change implications understood |
| Data readiness | Is the required data available, usable, and appropriately governed? | Weak data quickly undermines pilot quality and credibility | Data sources, access, quality, and permissions are broadly understood |
| Technical feasibility | Can the use case be delivered within the current architecture and capacity? | Prevents pilots that succeed in isolation but fail in production reality | Integration path is credible, and engineering effort is manageable |
| Risk exposure | Are key security, privacy, legal, reliability, and reputational risks visible? | Reduces the chance of late-stage objections or unsafe momentum | Main risks are known, and none appear unmanageable for the pilot scope |
| Ownership | Is there a named business owner and delivery owner? | Shared enthusiasm is not the same as accountability | Clear ownership of outcomes, execution, and escalation |
| Decision path | Do we know who can approve, pause, redirect, or stop the pilot? | Prevents drift and weak governance | Decision rights and review path are explicit |
| Delivery capacity | Does the team have the people and time to run the pilot properly? | Too many pilots fail because they are under-supported | Delivery, data, and governance capacity are sufficient for the proposed scope |
| Path to production | If the pilot works, is there a realistic next step? | Helps leaders back use cases that could actually scale | Clear view of what rollout would require and what gates sit ahead |

You can also score each criterion from 1 to 3. With 12 criteria, the maximum total is 36, and any use case scoring above 30 is a strong candidate.
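As a rough illustration of that scoring rule, the sketch below totals the 12 criteria from Table 7 and ranks a small set of candidates. The function names and the two candidate use cases are hypothetical, and "above 30" is read here as strictly greater than 30, which is an interpretation rather than something the rule spells out.

```python
from typing import Dict, List

# The 12 selection areas from Table 7.
CRITERIA: List[str] = [
    "Business problem", "Strategic relevance", "Value potential", "Workflow fit",
    "User needs and adoption", "Data readiness", "Technical feasibility",
    "Risk exposure", "Ownership", "Decision path", "Delivery capacity",
    "Path to production",
]
STRONG_THRESHOLD = 30  # assumption: "above 30" means strictly greater than 30


def pilot_score(scores: Dict[str, int]) -> int:
    """Sum the 1-3 scores across all 12 criteria (maximum 36)."""
    for name in CRITERIA:
        if scores.get(name) not in (1, 2, 3):
            raise ValueError(f"{name}: expected a score of 1, 2, or 3")
    return sum(scores[name] for name in CRITERIA)


def is_strong_candidate(scores: Dict[str, int]) -> bool:
    return pilot_score(scores) > STRONG_THRESHOLD


# Hypothetical candidates, scored for illustration only.
top_marks = {name: 3 for name in CRITERIA}
candidates = {
    "Support triage assistant": dict(top_marks),
    "Internal knowledge assistant": {
        **top_marks,
        "Ownership": 1, "Decision path": 1, "Data readiness": 2, "Risk exposure": 2,
    },
}

# Rank candidates from highest to lowest total.
ranked = sorted(candidates, key=lambda c: pilot_score(candidates[c]), reverse=True)
for name in ranked:
    print(name, pilot_score(candidates[name]), is_strong_candidate(candidates[name]))
```

Under this reading, a candidate can lose at most five points from the maximum of 36 and still count as strong. The second candidate totals exactly 30 and therefore falls just short, mostly because ownership and the decision path are weak.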

Board or Executive Update

- A good AI update should help leadership review progress, risk, resourcing, and the decisions required to move forward.

- The aim is not to show everything that is happening, but to show what matters most at the decision level.

Table 8: Suggested executive update structure

| Update area | What leadership needs to see | Why it matters | What good looks like |
| --- | --- | --- | --- |
| Portfolio summary | A concise view of active AI initiatives by stage: exploration, pilot, controlled rollout, scale | Gives executives a clean picture of where effort is concentrated | A simple portfolio view with clear stage definitions and no inflated reporting |
| Business value | What each priority initiative is expected to improve in cost, speed, quality, revenue, or risk reduction | Keeps the conversation tied to business outcomes rather than technical motion | Value stated clearly, with baseline and target where possible |
| Progress since last review | What has moved forward, what has stalled, and what has changed materially | Helps leaders track momentum without getting lost in detail | A short narrative focused on movement, not task lists |
| Risk position | The most material active risks across privacy, security, legal, adoption, vendor, and delivery | Makes risk part of the operating conversation, not a separate escalation later | Top risks summarized with ownership, mitigation status, and escalation threshold |
| Decisions required | The approvals, tradeoffs, or interventions needed from leadership now | Prevents updates from becoming passive status meetings | Specific decisions clearly framed with options and implications |
| Resourcing and capacity | Where delivery capacity, funding, or specialist support is constraining progress | Shows whether the portfolio is realistically supported | Clear view of bottlenecks, not vague references to bandwidth |
| Readiness to scale | Which initiatives are ready to move forward, which should remain in pilot, and which should stop | Brings discipline to go/no-go visibility | Readiness assessed against explicit criteria, not enthusiasm |
| Cross-functional alignment | Whether product, engineering, data, security, legal, and procurement are aligned | Exposes where friction is structural, not personal | Alignment issues stated plainly, with the owner and next action |
| Incidents or exceptions | Any major failures, policy breaches, quality issues, or unexpected operational problems | Reinforces that oversight includes live accountability, not just pipeline optimism | Clear summary of issue, response, impact, and corrective action |
| Next-period priorities | The few actions or outcomes leadership should expect before the next review | Keeps the operating rhythm focused and forward-looking | Three to five priorities, each tied to an owner and a timeline |

Example executive editorial update format

You can also present the update in a simple editorial structure like this:

1. Current portfolio view

12 active initiatives: 4 in exploration, 5 in pilot, 2 in controlled rollout, 1 at scaled deployment.

2. What is progressing

Two customer-support use cases moved from pilot to controlled rollout after meeting readiness criteria on workflow fit, quality threshold, and security review.

3. What is blocked

One internal knowledge assistant remains in pilot due to unresolved data-access controls and unclear ownership of rollback decisions.

4. Top risks

The highest current risks are vendor dependency in one workflow, weak adoption in another, and late legal review on a third externally facing use case.

5. Decisions required from leadership

Approve additional delivery capacity for the two rollout candidates. Decide whether to pause the internal knowledge assistant until data-access controls and rollback ownership are clarified. Confirm risk appetite for external-facing generative use cases this quarter.

6. What happens next

Before the next review, the team will complete one vendor assessment, close two open control actions, and return with a go/no-go recommendation on three pilot-stage initiatives.

Cadence

For most organizations, this works best as a monthly executive review and a quarterly board-level summary, with the board version simplified to focus on portfolio value, top risks, resourcing pressure, and major decisions ahead.

Vendor Evaluation Checklist

AI vendors are quite skilled at showing what a tool can do in ideal conditions. The real question is whether the product fits your environment, controls, workflows, and commercial reality.

The following checklist (Table 9) gives leadership a more disciplined way to assess a vendor before committing.

Table 9: Vendor evaluation checklist (example)

| Evaluation area | What leaders should test | Why it matters | What good looks like |
| --- | --- | --- | --- |
| Use-case fit | Does the product solve a defined business problem better than existing options? | A polished tool still creates noise if the use case is weak | Clear fit to a priority workflow, with an identifiable business outcome |
| Workflow integration | Can the tool work inside the systems, processes, and user behavior that already exist? | Many AI tools look strong in demo conditions but fail inside real operations | Proven compatibility with current workflows, systems, and team practices |
| Data handling | What data does the vendor access, store, retain, or use for model improvement? | Weak data controls can create privacy, security, and contractual risk | Clear data boundaries, retention policy, and customer control over sensitive data |
| Security posture | Are security controls, certifications, access models, and testing standards credible? | AI procurement often moves faster than control review | Transparent security documentation, strong access controls, and review readiness |
| Privacy and compliance | Can the product support your legal, regulatory, and policy obligations? | A tool can be technically useful and still commercially unusable | Clear compliance position, relevant certifications, and no unresolved policy conflicts |
| Model reliability | Are outputs consistent, explainable enough, and fit for the intended level of decision support? | Weak reliability erodes trust and creates operational risk | Tested performance in realistic scenarios, with known limitations stated clearly |
| Human oversight | Can users review, challenge, or override outputs where needed? | High-risk workflows need judgment, not blind automation | Clear review points, user visibility, and override capability |
| Implementation effort | How much integration, configuration, change work, and support effort is actually required? | Underestimated implementation cost is one of the fastest ways to kill value | Realistic implementation scope, named dependencies, and credible support plan |
| Vendor maturity | Is the vendor operationally stable enough to support long-term use? | A fast-moving market increases continuity risk | Evidence of customer support quality, roadmap clarity, and organizational stability |
| Commercial model | Do pricing, usage assumptions, and contract terms hold up under scale? | AI tools can look affordable until usage expands | Transparent pricing, sensible scale economics, and no hidden commercial traps |
| Interoperability and lock-in | Can you switch, extract data, or reduce dependency if priorities change? | Strong early performance can still create long-term lock-in | Open standards where possible, export paths, and clear exit terms |
| Monitoring and support | What happens after go-live if performance drops, incidents occur, or needs change? | Procurement should include the operating reality, not just the purchase moment | Defined support model, service expectations, escalation path, and change process |

You can also frame the checklist as a short set of practical questions (Table 10).

Table 10: Set of evaluation questions

| Question | What it helps prevent |
| --- | --- |
| Does this solve a real priority problem? | Buying for novelty rather than business value |
| Will it work in our actual workflow? | Demo success with no operational fit |
| Are the data and security controls acceptable? | Late-stage control objections and rework |
| Do we understand the legal and compliance position? | Procurement moving ahead of governance |
| Can users trust and challenge the outputs? | Over-reliance on weak or opaque outputs |
| What will implementation really require? | Hidden delivery cost and integration drag |
| Are the commercial terms still workable at scale? | Cost surprise after adoption grows |
| How easily could we exit or replace this vendor? | Lock-in without leverage |

Best practice and cadence

Use this checklist before vendor selection is finalized, and revisit it before rollout if the scope of the use case changes. In practice, it works best when product, engineering, security, procurement, and legal all review it together rather than in sequence. That makes tradeoffs visible earlier and reduces the chance of late-stage resistance.

Rollout Governance Model

The golden question here is:

What must be true before this use case moves further into the business?

The job of a rollout governance model is simple: define the checkpoints, decision rights, and control expectations that sit between early promise and scaled use.

In practice, this is what stops a pilot from becoming “live by drift.”

Table 11: Rollout governance model (example)

| Rollout stage | What the business is trying to prove | What must be true to move forward | Primary decision owners | What does this stage prevent |

| Exploration | The use case is relevant enough to investigate | The problem is clear, business value is plausible, and ownership is assigned | Business sponsor/Product lead | Time spent on novelty with no strategic case |

| Pilot | The use case can work in a bounded environment | Success criteria are defined, users are identified, risk review has started, delivery scope is realistic | Product/Delivery/Risk stakeholders | Pilots launched with no discipline or measurable outcome |

| Controlled rollout | The use case can operate safely in a live but limited setting | Workflow fit is proven, controls are in place, monitoring is active, rollback path exists | Product/Engineering/ Security/Legal as needed | Scaling something that works only in test conditions |

| Scale decision | The use case is ready for broader deployment | Value is evidenced, risk is acceptable, support model is ready, and executive visibility is in place | Executive sponsor/Leadership review | Moving to scale on momentum rather than evidence |

| Ongoing operation | The use case remains useful, safe, and governable over time | Performance is monitored, incidents are owned, review cadence is active, and changes are controlled | Operations/Product/Executive oversight | Treating launch as the end of governance |
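For teams that track rollout status in tooling, the stage gates in Table 11 can be sketched as a simple lookup. This is a minimal illustration, not a prescribed implementation: the stage names come from the table, while the short condition keys (such as `owner_assigned`) and the function name `next_stage` are hypothetical labels chosen for this sketch.

```python
# Stage order taken from Table 11.
STAGES = [
    "Exploration",
    "Pilot",
    "Controlled rollout",
    "Scale decision",
    "Ongoing operation",
]

# Conditions that must hold before leaving each stage, paraphrased from
# the "What must be true to move forward" column. The keys are
# hypothetical labels invented for this sketch.
GATES = {
    "Exploration": {"problem_clear", "value_plausible", "owner_assigned"},
    "Pilot": {
        "success_criteria_defined",
        "users_identified",
        "risk_review_started",
        "scope_realistic",
    },
    "Controlled rollout": {
        "workflow_fit_proven",
        "controls_in_place",
        "monitoring_active",
        "rollback_path_exists",
    },
    "Scale decision": {
        "value_evidenced",
        "risk_acceptable",
        "support_model_ready",
        "executive_visibility",
    },
}


def next_stage(current, evidence):
    """Return (stage, missing_conditions).

    The use case advances exactly one stage, and only when every gate
    condition for its current stage appears in `evidence`. The final
    stage has no forward move, so it is returned unchanged.
    """
    required = GATES.get(current, set())
    missing = sorted(required - set(evidence))
    if missing or current == STAGES[-1]:
        return current, missing
    return STAGES[STAGES.index(current) + 1], []


# A pilot with only part of its evidence stays where it is.
stage, missing = next_stage(
    "Pilot", {"success_criteria_defined", "users_identified"}
)
```

The point of the sketch is that a use case cannot skip a stage, and that a blocked move always comes with a named list of what is missing.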

There is also a more practical version that leaders can use in a workshop or steering meeting (Table 12).

Table 12: Rollout governance checklist

| Checkpoint area | Key question | Why it matters | Ready/Not ready |

| Problem definition | Is the use case tied to a clear business problem worth solving? | Prevents rollout built on vague promise | |

| Ownership | Is there a named business owner and delivery owner? | Prevents shared interest from being mistaken for accountability | |

| Success criteria | Have we defined what success looks like in the pilot and at rollout? | Prevents decisions based on activity rather than evidence | |

| Workflow fit | Has the solution been tested in the real workflow it is meant to improve? | Prevents strong demos with weak operational fit | |

| Security review | Have security requirements been reviewed and addressed at the right stage? | Prevents late-stage objections and avoidable rework | |

| Privacy and legal review | Have privacy, legal, and compliance questions been resolved? | Prevents rollout ahead of governance | |

| Data readiness | Is the data usable, accessible, and governed appropriately? | Prevents scaling on weak inputs or unclear data rights | |

| Reliability threshold | Has the solution met an agreed quality or accuracy threshold? | Prevents rollout on inconsistent performance | |

| Human oversight | Is there clarity on where human review or override is required? | Prevents over-automation in sensitive workflows | |

| Monitoring | Are performance, misuse, and exceptions being tracked? | Prevents unmanaged drift after launch | |

| Incident response | Is there a clear owner and response path if something goes wrong? | Prevents confusion during failure or escalation | |

| Rollback readiness | Can the organization pause, limit, or reverse deployment if needed? | Prevents fragile launches with no exit path | |

| Support model | Are training, adoption, and operational support in place? | Prevents rollout that teams cannot sustain | |

| Executive visibility | Is this use case visible in the right review cadence with clear go/no-go ownership? | Prevents scale decisions from happening by inertia | |
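If the checklist is captured in a spreadsheet or tracker, the Ready/Not ready column can be rolled up into a single recommendation. The sketch below assumes a strict rule that any checkpoint marked not ready, or left unanswered, produces a no-go. That rule is an assumption made for illustration, as is the function name `review`; a steering group may reasonably choose to weight some checkpoints differently.

```python
# Checkpoint areas taken from Table 12, in the same order.
CHECKPOINTS = [
    "Problem definition",
    "Ownership",
    "Success criteria",
    "Workflow fit",
    "Security review",
    "Privacy and legal review",
    "Data readiness",
    "Reliability threshold",
    "Human oversight",
    "Monitoring",
    "Incident response",
    "Rollback readiness",
    "Support model",
    "Executive visibility",
]


def review(answers):
    """Roll the checklist up into a go/no-go recommendation.

    `answers` maps a checkpoint area to True (ready) or False (not ready).
    Assumption for this sketch: unanswered checkpoints count as not ready,
    and a single not-ready checkpoint is enough for a no-go.
    """
    not_ready = [c for c in CHECKPOINTS if not answers.get(c, False)]
    decision = "go" if not not_ready else "no-go"
    return {"decision": decision, "not_ready": not_ready}


# Example: everything is ready except rollback.
answers = {c: True for c in CHECKPOINTS}
answers["Rollback readiness"] = False
result = review(answers)
```

Returning the list of open checkpoints alongside the decision keeps the conversation on what has to change, not only on the verdict.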

What Good Looks Like 90 Days After Implementing the AI Operating Model

For most organizations, the first 90 days are about becoming more controlled, not about reaching maturity. Current research shows that many companies are active in AI but still early in scaling it, and only a small minority describe themselves as truly mature.

In practical terms, this 90-day window starts when leadership begins using the model in the real business. By the end of it, decision rights are clearer, pilot selection is more disciplined, cross-functional review is active, and executive reporting follows a repeatable cadence.

Table 13: Post-implementation changes (after 90 days)

| What changes after 90 days | What that looks like in practice |

| Fewer random pilots | The portfolio is smaller, more deliberate, and easier to explain. Low-value experiments are easier to stop, and new ideas are screened against clearer readiness criteria before they absorb more time or budget. |

| Clearer ownership | There is less ambiguity across product, engineering, data, and security. Teams can name the business owner, the delivery owner, the review path, and the final decision-maker. |

| Faster go/no-go decisions | Decisions move with less circular debate because the criteria are clearer. Stronger use cases progress with fewer delays, while weaker pilots are paused earlier and with less friction. |

| Stronger board-level narrative | Executive updates become easier to govern because progress, risk, resourcing pressure, and decisions required are visible in the same conversation. That matters because boards are being asked to oversee AI more actively, even while many organizations are still building the structures to support that oversight. |

| Better balance between speed and control | Teams are still moving, but not by drift. Risk review happens earlier, scaling decisions are more deliberate, and the organization is less likely to confuse visible activity with operational readiness. That aligns with broader research showing the hard part of AI adoption is often not experimentation, but the systems and operating discipline needed to scale it. |

A Practical Roadmap for the First 12 Months

The first 90 days are about creating control. The roadmap below (Table 14) shows how that work typically unfolds from the moment leadership begins putting an AI operating model in place, through the first year of embedding it more consistently across the business.

Table 14: A 12-month roadmap

| Timeframe | What is happening at this stage | What good looks like in practice |

| 0–30 days | Leadership begins putting the model in place | Current pilots are visible, ownership starts to become clearer, key risk gaps are identified, and the first decision forums are established |

| 30–90 days | The first working version of the model goes live | Use-case selection criteria are in use, risk review is active, reporting cadence begins, and go/no-go checkpoints start shaping decisions |

| 3–6 months | The model starts becoming the default way of operating | AI work is approved, reviewed, and challenged through a clearer structure rather than through ad hoc discussions or executive pressure |

| 6–12 months | The model becomes more embedded across the portfolio | Templates are refined, governance becomes more consistent, and AI decisions are linked more clearly to budgeting, resourcing, and executive oversight |

Frequently Asked Questions (FAQ)

What is an AI operating model?

An AI operating model is the structure that helps an organization move from scattered experimentation to repeatable delivery. It clarifies who owns decisions, how work is governed, what controls must be in place, and how AI use cases move from pilot to scale.

Why do so many AI initiatives stall after the pilot stage?

Most organizations are still struggling to turn AI activity into scaled business impact. The usual blockers are unclear ownership, weak governance, poor workflow integration, and an inability to connect experiments to measurable value.

Who should own AI in the business?

AI should not belong to a single function. Effective ownership usually combines business leadership, product and delivery teams, data and engineering, and risk functions such as security, legal, and compliance. What matters most is clear decision rights and named accountability.

How do we decide which AI use cases are worth scaling?

The strongest candidates solve a real business problem, fit an actual workflow, have usable data, meet control requirements, and show a credible path to measurable value. In other words, leaders should scale use cases based on readiness and business relevance, not novelty or executive excitement.

What kind of governance is needed to scale AI responsibly?

Organizations need practical governance, not performative governance. That usually means clear review points, defined risk thresholds, cross-functional oversight, and operating rules that support speed with control rather than slowing everything down by default.

What risks should be reviewed before rollout?

The most common risks include privacy, security, legal exposure, model reliability, bias, third-party dependency, and weak post-launch monitoring. These should be reviewed early, not after a use case is already gathering momentum.

How should leaders measure AI success?

AI success should be tied to business outcomes such as cost reduction, speed, quality, revenue impact, or risk reduction. Leaders also need evidence that the solution works reliably in live workflows, not just in a demo or isolated pilot.

What should boards and executives review regularly?

Boards and executive teams should focus on portfolio visibility, business value, risk exposure, readiness to scale, resourcing pressure, and the decisions that management needs to make next. Oversight works best when AI is treated as an operating and governance issue, not just an innovation update.

Conclusion

The teams that win with AI will not be the ones that try the most.

Selective scaling beats broad experimentation because it creates value rather than just visibility. It does so by concentrating attention, decision quality, delivery capacity, and trust on the use cases that merit them.

At the same time, leadership credibility depends on operating discipline. To put it bluntly, leaders must be able to explain what is being pursued, who owns it, how risk is being managed, and why a use case deserves to move forward. Ownership, readiness, governance, and executive accountability are what make momentum usable.

The organizations that pull ahead will be the ones that know where AI belongs, what is ready to scale, and what should stop before more time and budget are consumed. That is the strongest case for building the model before expanding the portfolio.